Just when you thought we wouldn’t remind you about the RS2 and why you need one (or two) in your life, there is more!

Today I teamed up with my “close-by” EUREF NTRIP service in Warnemünde, Germany, a “mere” 130 km away from my test site.

The purpose this time was to test the relative accuracy of the RS2 over a baseline more than twice as long as the recommended maximum of 60 km.
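To check how far a base station is from your site, a great-circle (haversine) distance between the two coordinates is usually close enough. Here is a minimal Python sketch; the coordinates are hypothetical placeholders, and the spherical Earth model is an approximation (an ellipsoidal computation would differ slightly).

```python
import math

EARTH_RADIUS_KM = 6371.0      # mean Earth radius; spherical approximation
RECOMMENDED_MAX_KM = 60.0     # recommended maximum RTK baseline mentioned above


def baseline_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle (haversine) distance in km between two lat/lon points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    # min() guards against tiny floating-point overshoot above 1.0
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


if __name__ == "__main__":
    # Hypothetical base and rover coordinates, for illustration only.
    base = (54.18, 12.08)
    rover = (55.10, 10.60)
    d = baseline_km(*base, *rover)
    print(f"Baseline: {d:.1f} km ({d / RECOMMENDED_MAX_KM:.1f}x the recommended maximum)")
```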

So the overall plan was as follows:

- Start the RS2 on the rover pole, verify the mobile connection to NTRIP, and start logging raw data and corrections

- Start charging the batteries

- Place the GCPs

- Survey the GCPs in ReachView

- Fly the mapping mission using Pix4Dcapture and a DJI Phantom 4 Pro

- Pack up

- Get back and process using Agisoft Metashape

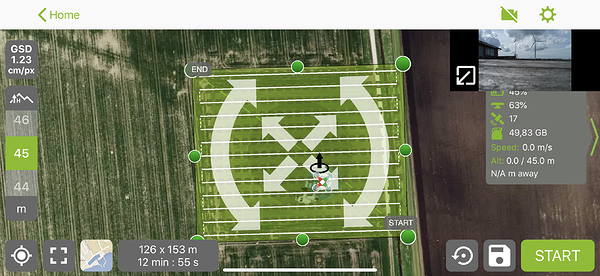

So, to begin with, this is what the mission looks like:

I used a generous 85% overlap, both front and side, with the camera pointing straight down.
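For anyone curious what 85% overlap means on the ground, here is a minimal Python sketch of the standard nadir-footprint arithmetic. The camera figures are the commonly quoted Phantom 4 Pro specs (13.2 × 8.8 mm sensor, 8.8 mm focal length, 5472 px image width); the 60 m flight altitude and the assumption that the short image side lies along the flight line are hypothetical, since the mission settings above don't state them. Pix4Dcapture does this planning for you; the sketch only shows the geometry.

```python
from dataclasses import dataclass


@dataclass
class Camera:
    sensor_w_mm: float   # sensor width (long side)
    sensor_h_mm: float   # sensor height (short side)
    focal_mm: float      # real (not 35 mm equivalent) focal length
    image_w_px: int      # image width in pixels


# Commonly quoted Phantom 4 Pro values; check them against your own camera.
P4P = Camera(sensor_w_mm=13.2, sensor_h_mm=8.8, focal_mm=8.8, image_w_px=5472)


def nadir_plan(cam: Camera, altitude_m: float, front_overlap: float, side_overlap: float):
    """Return (GSD in cm/px, distance between photos in m, distance between flight lines in m)."""
    # Ground footprint of one nadir image, by similar triangles.
    footprint_w = cam.sensor_w_mm * altitude_m / cam.focal_mm
    footprint_h = cam.sensor_h_mm * altitude_m / cam.focal_mm
    gsd_cm = 100.0 * footprint_w / cam.image_w_px
    # Assumption: the short image side lies along the flight line.
    photo_spacing = footprint_h * (1.0 - front_overlap)
    line_spacing = footprint_w * (1.0 - side_overlap)
    return gsd_cm, photo_spacing, line_spacing


if __name__ == "__main__":
    # Hypothetical 60 m altitude; the post does not state the flight height.
    gsd, photo, line = nadir_plan(P4P, altitude_m=60.0, front_overlap=0.85, side_overlap=0.85)
    print(f"GSD {gsd:.2f} cm/px, photo every {photo:.1f} m, lines every {line:.1f} m")
```

At the assumed 60 m this works out to roughly 1.64 cm/px, a photo every 9 m and flight lines 13.5 m apart, which is why such high overlap quickly adds up to hundreds of images.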

Before I started, I turned on the RS2. It reached a fixed solution in roughly one minute. Pretty impressive, given the long baseline and the fact that the NTRIP stream only provides GPS+GLONASS.

Turning on the unit this early gives me the option of using the “Static Start” method in RTKPOST, should I decide to post-process the data.

Next came surveying the GCPs. Quite uneventful: no lost fixes, business as usual, except for the 130 km baseline. I used a 20-second collection time per point, though judging by the RMS, 5 seconds would easily have been enough.
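One way to judge whether a shorter collection time would do is to look at how tightly the individual fixes cluster around their average. The sketch below is a hypothetical illustration, assuming 1 Hz (east, north, up) samples in metres; it is not necessarily how ReachView computes its own RMS figure, and the sample values are made up.

```python
import math
from statistics import fmean


def average_fix(samples):
    """samples: list of (east, north, up) fixes in metres.
    Returns the mean position and the horizontal / vertical RMS about that mean."""
    east, north, up = zip(*samples)
    me, mn, mu = fmean(east), fmean(north), fmean(up)
    h_rms = math.sqrt(fmean((e - me) ** 2 + (n - mn) ** 2 for e, n in zip(east, north)))
    v_rms = math.sqrt(fmean((u - mu) ** 2 for u in up))
    return (me, mn, mu), h_rms, v_rms


if __name__ == "__main__":
    # Hypothetical 1 Hz fixes (metres, relative to an arbitrary origin).
    samples = [(0.002 * (i % 3), 0.001 * (i % 2), 0.004 * (i % 4)) for i in range(20)]
    for seconds in (5, 20):
        mean, h, v = average_fix(samples[:seconds])
        print(f"{seconds:>2} s: H RMS {h * 1000:.1f} mm, V RMS {v * 1000:.1f} mm")
```

If the 5-second and 20-second spreads are about the same, the extra collection time buys little.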

Here are a few shots from the process:

After successfully surveying all the points, it was time to get airborne. The mission produced a total of 301 images.

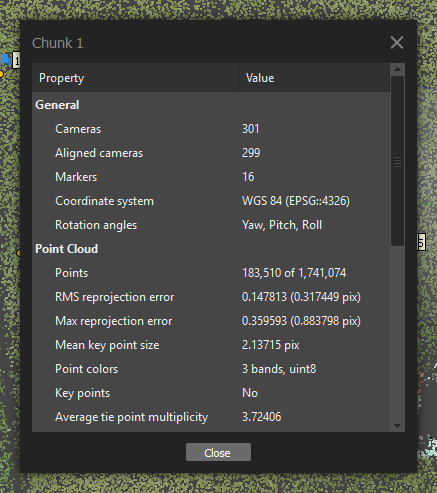

Back at the computer, I ran all the photogrammetry processing in Agisoft Metashape. After cleaning up the sparse point cloud and adding the GCPs, this is how it looks:

General statistics:

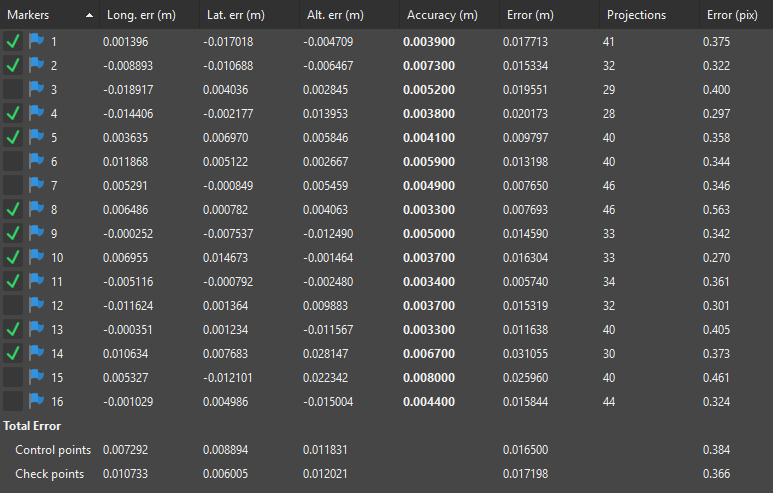

And now for what really counts for the RS2: the GCP error! The average control point RMS is 0.0165 m and the average check point RMS is 0.0172 m. Even over a 100 m baseline, that would have been acceptable to most!
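Metashape reports these figures in its control and check point tables. As a rough illustration of how such an RMS is conventionally computed (square each residual, average over the markers, take the square root), here is a minimal Python sketch. The residuals are hypothetical placeholders, not the values from this survey, and Metashape's internal computation may differ in details.

```python
import math


def rms(values):
    """Root mean square of a sequence of numbers."""
    return math.sqrt(sum(v * v for v in values) / len(values))


def gcp_rms(errors):
    """errors: list of (dx, dy, dz) residuals in metres, one tuple per marker.
    Returns (X RMS, Y RMS, Z RMS, total RMS)."""
    dx, dy, dz = zip(*errors)
    # Per-marker 3D error, then RMS over all markers.
    totals = [math.sqrt(x * x + y * y + z * z) for x, y, z in errors]
    return rms(dx), rms(dy), rms(dz), rms(totals)


if __name__ == "__main__":
    # Hypothetical residuals, NOT the values from this survey.
    control = [(0.008, -0.005, 0.012), (-0.006, 0.004, -0.010), (0.003, 0.007, 0.009)]
    x, y, z, total = gcp_rms(control)
    print(f"X {x * 100:.2f} cm, Y {y * 100:.2f} cm, Z {z * 100:.2f} cm, total {total * 100:.2f} cm")
```

With this definition the squared total RMS equals the sum of the squared per-axis RMS values, so the total is always at least as large as any single axis.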

Here are all the numbers:

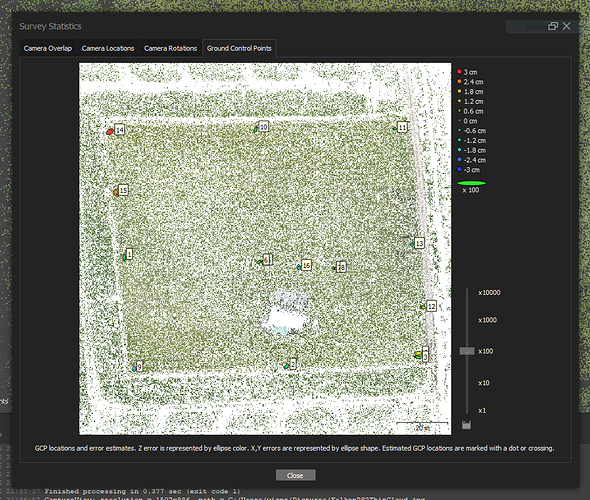

Here is a more graphical view, at 100x error magnification:

All GCP coordinates were taken directly from ReachView, so they are all RTK data.

I might also post-process the data at a later stage, when I find the time.

Thank you for taking the time to read through the post!