I placed my rover (Reach M+) on the ground and used a Reach RS+ as the base station.

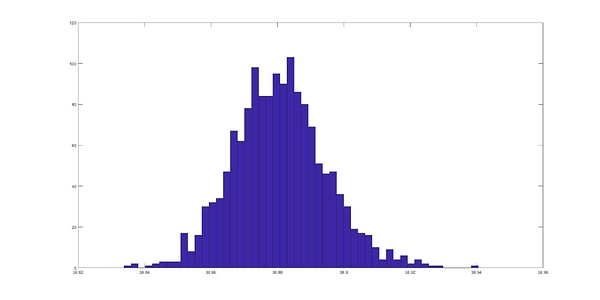

After PPK analysis, I found that my data follows a Gaussian distribution.

My question is: if I record video with a UAV, how can I know its accurate position at every moment?

Hi @qzmp69375,

The accurate Reach M+ position can be transmitted to the UAV autopilot if it supports ERB or NMEA format. You can connect the autopilot with Reach M+ over a serial port and configure Position output in the ReachView app.
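
For anyone reading the NMEA stream on the receiving side, here is a minimal sketch of decoding a single GGA sentence. It assumes standard NMEA 0183 GGA field order; the function names (`nmea_checksum`, `dm_to_degrees`, `parse_gga`) are made up for this example and are not part of ReachView or any autopilot API.

```python
from functools import reduce


def nmea_checksum(body: str) -> int:
    """XOR of all characters between '$' and '*' (the NMEA 0183 checksum)."""
    return reduce(lambda acc, ch: acc ^ ord(ch), body, 0)


def dm_to_degrees(value: str, hemisphere: str) -> float:
    """Convert NMEA (d)ddmm.mmmm plus hemisphere to signed decimal degrees."""
    dot = value.index(".")
    degrees = float(value[:dot - 2])   # everything before the two minute digits
    minutes = float(value[dot - 2:])   # mm.mmmm
    decimal = degrees + minutes / 60.0
    return -decimal if hemisphere in ("S", "W") else decimal


def parse_gga(sentence: str) -> dict:
    """Parse one GGA sentence into a small dict; raises ValueError if invalid."""
    text = sentence.strip()
    if not text.startswith("$") or "*" not in text:
        raise ValueError("not an NMEA sentence")
    body, given = text[1:].split("*", 1)
    if nmea_checksum(body) != int(given[:2], 16):
        raise ValueError("checksum mismatch")
    fields = body.split(",")
    if not fields[0].endswith("GGA"):
        raise ValueError("not a GGA sentence")
    return {
        "utc": fields[1],
        "lat": dm_to_degrees(fields[2], fields[3]),
        "lon": dm_to_degrees(fields[4], fields[5]),
        "fix_quality": int(fields[6]),  # 4 = RTK fixed, 5 = RTK float
        "num_sats": int(fields[7]),
        "alt_m": float(fields[9]),
    }
```

Opening the serial port is left out on purpose, since the port name and baud rate depend on your wiring and on what you set in the Position output settings.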

However, I’m afraid that a video can’t be geotagged with the Reach position data. May I ask you to tell me more about your application so that I can think of the appropriate solution?

Not my area of expertise, but I guess you are trying to find an accurate offset of the video relative to GPS time. That will probably also depend on your camera hardware, the delay of the video processing, whether you can synchronize the shutter or not…

Maybe the offset can be estimated or calibrated by placing the camera setup on a rotating platform in a controlled environment and comparing the angle seen in the image with a pulse generated when the platform passes a reference point.

Thanks for all the answers.

I want to record video with a DJI Mavic 2 Pro equipped with an M+ so that I have an accurate position of the UAV at every moment.

I assumed the M+ data could be used to correct the UAV's drift, so that I can use it for image processing.

The problem is that even when the device is still on the ground, the random error in the height data is about 5 cm. So I can't be sure whether the UAV is drifting or not.

The following picture shows my setup on the UAV. Is there another solution?

Hi @qzmp69375,

I’m afraid that DJI has a proprietary ecosystem, so there’s no easy way to feed the Reach RTK position into the DJI drone’s autopilot. You can log data on Reach and get the precise position after the flight, but in this case, you will not be able to use it for navigation.

Usually, the drone's own position is known to within a few meters. Reach M+ working in RTK or PPK can determine the position to within a few centimeters. The 5 cm variation you see is within a standard deviation, so it should not be an issue.
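
One way to separate real drift from receiver noise is to measure the scatter of the static ground log first and then compare in-flight deviations against it. This is only a rough sketch: the helper names and the 3-sigma threshold are assumptions for illustration, and the heights would come from your own PPK solution.

```python
import statistics


def height_scatter(heights_m):
    """Return (mean, sample standard deviation, largest deviation from mean)."""
    mean = statistics.fmean(heights_m)
    std = statistics.stdev(heights_m)
    peak = max(abs(h - mean) for h in heights_m)
    return mean, std, peak


def exceeds_noise(deviation_m, static_std_m, k=3.0):
    """True if a deviation is larger than k standard deviations of the
    static noise, i.e. unlikely to be explained by receiver noise alone.
    k = 3 is an assumed threshold, not a recommendation from Emlid."""
    return abs(deviation_m) > k * static_std_m
```

If a height change during hover passes `exceeds_noise` against the standard deviation from the ground test, it is more likely to be actual movement of the UAV than measurement scatter.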

I haven’t done it myself, but someone here used geotagged video from a DJI drone in Pix4D and was able to build a model from it (I saw the result myself). From what I understand, Pix4D has a utility to split video frames into individual images and interpolate the missing positions from the drone’s internal geotagging. If it is possible to replace the internal GNSS with the M+ output, then I think this is possible.
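
If you go the post-processing route, the same interpolation idea can be applied to the M+ log yourself. The sketch below is not what Pix4D does internally; it just linearly interpolates a logged track at each video frame time. It assumes you already know the GPS time of the first frame (the hard part discussed earlier in this thread), and the function names and track layout are hypothetical.

```python
from bisect import bisect_right


def interpolate_position(track, t):
    """Linearly interpolate (lat, lon, height) at time t.

    track: list of (time_s, lat_deg, lon_deg, height_m), sorted by time.
    """
    times = [p[0] for p in track]
    if not times[0] <= t <= times[-1]:
        raise ValueError("time is outside the logged track")
    i = bisect_right(times, t)
    if i == len(track):              # t is exactly the last logged epoch
        return tuple(track[-1][1:])
    t0, *p0 = track[i - 1]
    t1, *p1 = track[i]
    w = (t - t0) / (t1 - t0)         # 0 at the earlier epoch, 1 at the later
    return tuple(a + w * (b - a) for a, b in zip(p0, p1))


def frame_positions(track, first_frame_time_s, fps, n_frames):
    """Position for each of n_frames frames, assuming a constant frame rate."""
    return [
        interpolate_position(track, first_frame_time_s + k / fps)
        for k in range(n_frames)
    ]
```

Linear interpolation of latitude and longitude is reasonable here only because consecutive log epochs are a fraction of a second apart; any error in the assumed first-frame time shifts every frame position along the flight path.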

Would applying some kind of filter to the GNSS data be a possible way to make the data smoother?

Hi @qzmp69375,

You can select which constellations to include in the calculation and set the elevation mask angle and the SNR mask in the RTKPost options. The elevation mask filters out low satellites, which can be hidden behind trees or buildings and give an unstable signal. The SNR mask filters out noisy signals.
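
To make the idea concrete, here is a conceptual sketch of what these masks do to a set of observations. It is not RTKPost's implementation; the `SatObs` structure, the `apply_masks` function, and the default thresholds are assumptions chosen only for illustration. Satellite IDs follow the RINEX-style convention where the first letter is the constellation (G = GPS, R = GLONASS, E = Galileo).

```python
from dataclasses import dataclass


@dataclass
class SatObs:
    sat_id: str           # e.g. "G05"; first letter identifies the constellation
    elevation_deg: float  # elevation above the horizon
    snr_dbhz: float       # signal-to-noise ratio


def apply_masks(observations, elev_mask_deg=15.0, snr_mask_dbhz=35.0,
                systems=("G", "R", "E")):
    """Keep only observations from the selected constellations that are
    at or above both the elevation mask and the SNR mask."""
    return [
        o for o in observations
        if o.sat_id[0] in systems
        and o.elevation_deg >= elev_mask_deg
        and o.snr_dbhz >= snr_mask_dbhz
    ]
```

Note that this rejects whole observations rather than smoothing the resulting positions, which is why masks clean up the solution without distorting it.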

However, we don’t provide GNSS data smoothing options, as they may distort the result.
